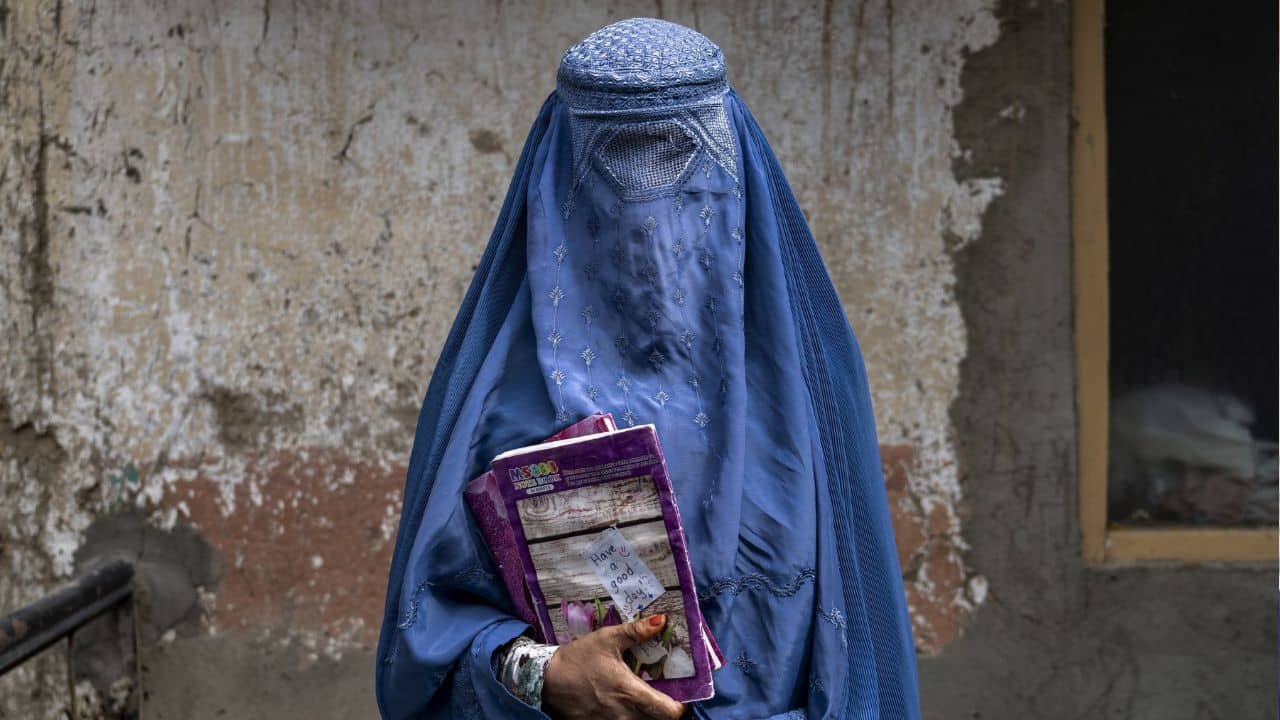

A fabricated audio clip of a US high school principal prompted a torrent of outrage, leaving him battling allegations of racism and anti-Semitism in a case that has sparked new alarm about AI manipulation.

Police charged a disgruntled staff member at the Maryland school with manufacturing the recording that surfaced in January — purportedly of principal Eric Eiswert ranting against Jews and “ungrateful Black kids” — using artificial intelligence.

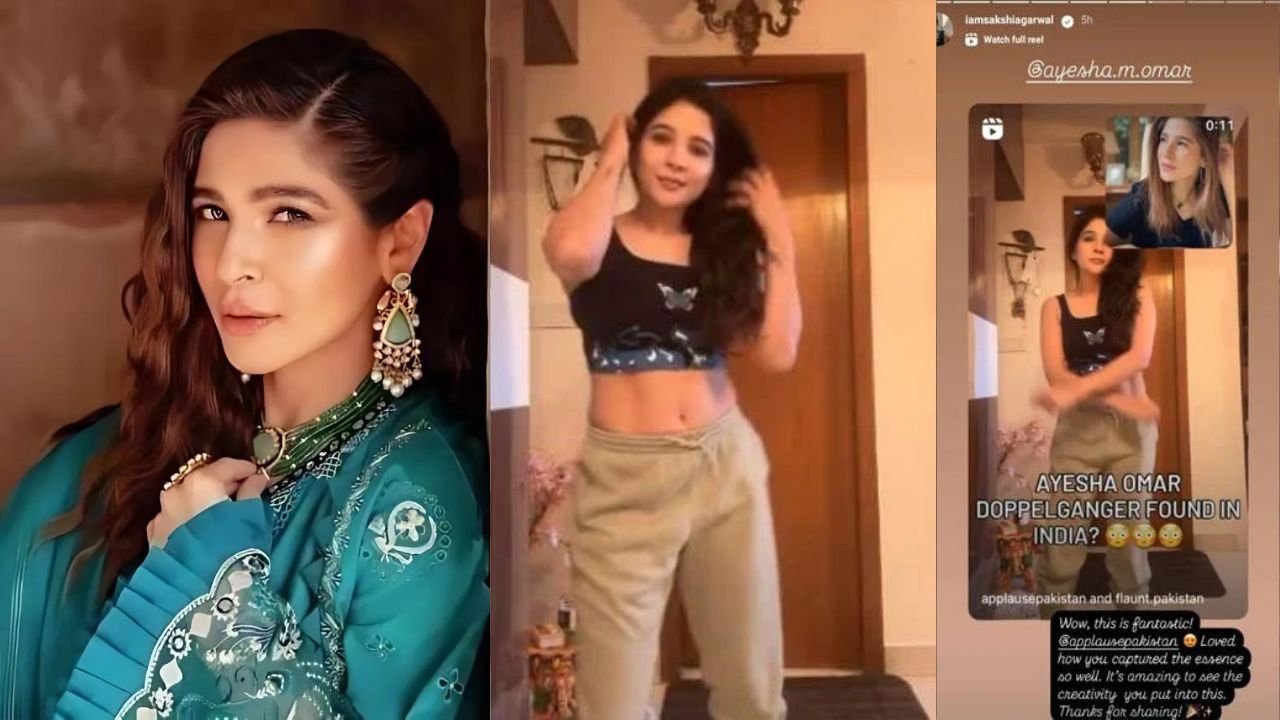

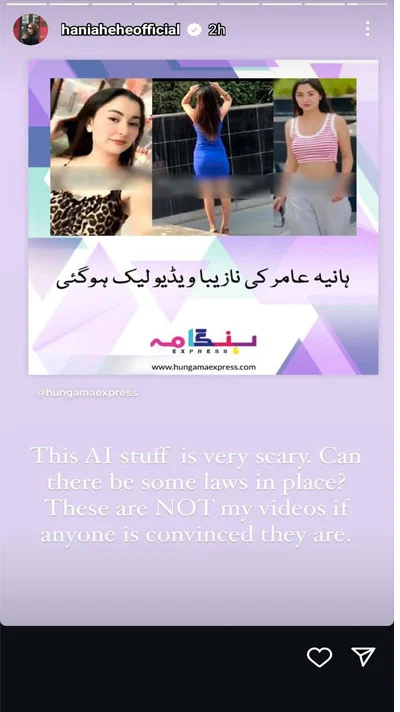

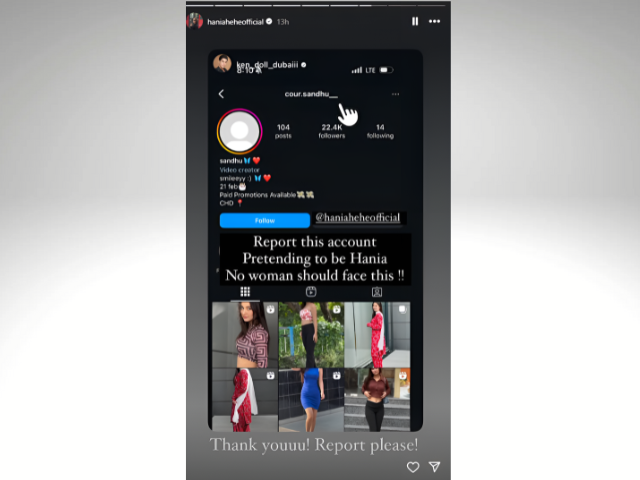

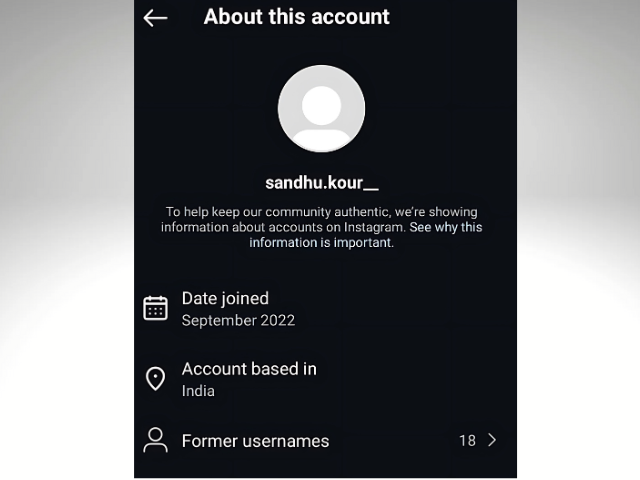

The clip, which left administrators of Pikesville High School fielding a flood of angry calls and threats, underscores the ease with which widely available AI and editing tools can be misused to impersonate celebrities and everyday citizens alike.

In a year of major elections globally, including in the United States, the episode also demonstrates the perils of realistic deepfakes as the law plays catch-up.

“You need one image to put a person into a video, you need 30 seconds of audio to clone somebody’s voice,” Hany Farid, a digital forensics expert at the University of California, Berkeley, told AFP.

“There’s almost nothing you can do unless you hide under a rock.

“The threat vector has gone from the Joe Bidens and the Taylor Swifts of the world to high school principals, 15-year-olds, reporters, lawyers, bosses, grandmothers. Everybody is now vulnerable.”

After the official probe, the school’s athletic director, Dazhon Darien, 31, was arrested late last month over the clip.

Charging documents say staffers at Pikesville High School felt unsafe after the audio emerged. Teachers worried the campus was bugged with recording devices while abusive messages lit up Eiswert’s social media.

The “world would be a better place if you were on the other side of the dirt,” one X user wrote to Eiswert.

Eiswert, who did not respond to AFP’s request for comment, was placed on leave by the school and needed security at his home.

‘Damage’

When the recording hit social media in January, boosted by a popular Instagram account whose posts drew thousands of comments, the crisis thrust the school into the national spotlight.

The audio was amplified by activist DeRay McKesson, who called for Eiswert’s firing in posts to his nearly one million followers on X. When the charges surfaced, he conceded he had been fooled.

“I continue to be concerned about the damage these actions have caused,” said Billy Burke, executive director of the union representing Eiswert, referring to the recording.

The manipulation comes as multiple US schools have struggled to contain AI-enabled deepfake pornography, leading to harassment of students amid a lack of federal legislation.

Scott Shellenberger, the Baltimore County state’s attorney, said at a press conference that the Pikesville incident highlights the need to “bring the law up to date with the technology.”

His office is prosecuting Darien on four charges, including disturbing school activities.

‘A million principals’

Investigators tied the audio to the athletic director in part by connecting him to the email address that initially distributed it.

Police say the alleged smear-job came in retaliation for a probe Eiswert opened in December into whether Darien authorized an illegitimate payment to a coach who was also his roommate.

Darien searched for AI tools over the school’s network before the audio came out, and he had been using “large language models,” according to the charging documents.

A University of Colorado professor who analyzed the audio for police concluded it “contained traces of AI-generated content with human editing after the fact.”

Investigators also consulted Farid, writing that the California expert found it was “manipulated, and multiple recordings were spliced together using unknown software.”

AI-generated content, and audio in particular, which experts say is especially difficult to spot, sparked national alarm in January when a fake robocall posing as Biden urged New Hampshire residents not to vote in the state’s primary.

“It impacts everything from entire economies, to democracies, to the high school principal,” Farid said of the technology’s misuse.

Eiswert’s case has been a wake-up call in Pikesville, revealing how disinformation can roil even “a very tight-knit community,” said Parker Bratton, the school’s golf coach.

“There’s one president. There’s a million principals. People are like: ‘What does this mean for me? What are the potential consequences for me when someone just decides they want to end my career?’”

“We’re never going to be able to escape this story.”